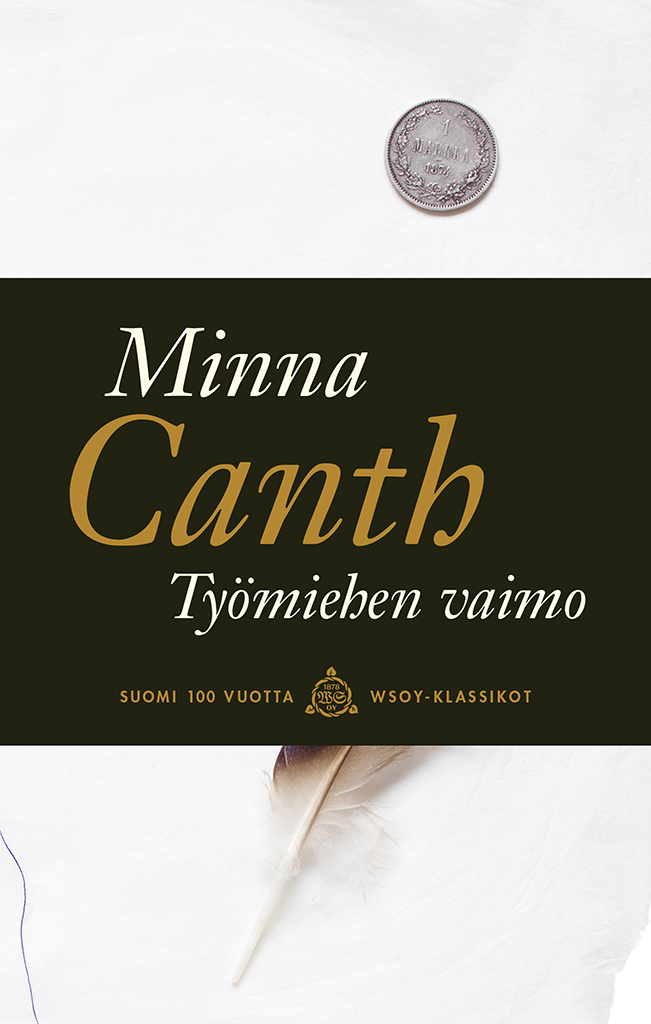

7/8/2023 0 Comments Tyomiehen vaimo

Long-tailed multi-label visual recognition (LTML) is a highly challenging task due to label co-occurrence and imbalanced data distribution. In this work, we propose a unified framework for LTML, namely prompt tuning with class-specific embedding loss (LMPT), which captures the semantic feature interactions between categories by combining text and image modality data and improves performance on head and tail classes simultaneously.

Specifically, LMPT introduces an embedding loss function with class-aware soft margin and re-weighting to learn class-specific contexts with the benefit of textual descriptions (captions), which helps establish semantic relationships between classes, especially between the head and tail classes. Furthermore, to account for class imbalance, the distribution-balanced loss is adopted as the classification loss function to further improve performance on the tail classes without compromising the head classes.

Extensive experiments on the VOC-LT and COCO-LT datasets demonstrate that the proposed method significantly surpasses previous state-of-the-art methods and zero-shot CLIP on LTML.
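The idea of an embedding loss with a class-aware soft margin and re-weighting can be illustrated with a small NumPy sketch. This is not the formulation used by LMPT, which the text above does not spell out. It is a generic hinge-style loss under stated assumptions: the function name `class_aware_margin_loss`, the `max_margin` parameter, the `n^(-1/4)` margin scaling, and the inverse-frequency weights are all hypothetical choices made for illustration.

```python
import numpy as np


def class_aware_margin_loss(sim, labels, class_counts, max_margin=0.5):
    """Hinge-style embedding loss with per-class margins and weights.

    sim          : (N, C) similarities between sample and class embeddings
    labels       : (N, C) multi-label targets, 1 = class present, 0 = absent
    class_counts : (C,) number of training samples per class
    All modelling choices below are illustrative assumptions.
    """
    sim = np.asarray(sim, dtype=float)
    labels = np.asarray(labels, dtype=float)
    counts = np.asarray(class_counts, dtype=float)

    # Assumption: rarer classes get a larger margin (n^(-1/4) scaling),
    # rescaled so the rarest class receives exactly max_margin.
    margins = counts ** -0.25
    margins = max_margin * margins / margins.max()

    # Assumption: inverse-frequency re-weighting, normalised to mean 1.
    weights = 1.0 / counts
    weights = weights / weights.mean()

    # Positive pairs are penalised when similarity falls below the class margin.
    pos_term = labels * np.maximum(0.0, margins - sim)
    # Negative pairs are penalised when similarity rises above zero.
    neg_term = (1.0 - labels) * np.maximum(0.0, sim)

    # Broadcasting applies the (C,) weights to every row of the (N, C) terms.
    return float(np.mean(weights * (pos_term + neg_term)))


if __name__ == "__main__":
    counts = [1000, 10]  # one head class, one tail class
    missed_head = class_aware_margin_loss([[0.0, 0.0]], [[1, 0]], counts)
    missed_tail = class_aware_margin_loss([[0.0, 0.0]], [[0, 1]], counts)
    print(missed_head, missed_tail)
```

In this sketch a missed tail-class positive costs more than a missed head-class positive, because both its margin and its weight are larger. That is the intuition behind combining a soft margin with re-weighting on long-tailed data.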
